A recent ICLR 2026 paper proposes a more direct approach to hybrid retrieval: instead of fusing retriever outputs, Guided Query Refinement (GQR) performs test-time optimization on the query embedding itself. This post breaks down the GQR architecture, the experimental results on the ViDoRe benchmark, and why the approach can be an efficient option for high-performance RAG pipelines.

Guided Query Refinement (GQR) — ICLR 2026 — arXiv | GitHub

Motivation

Hybrid retrieval has become the default strategy in modern retrieval systems, especially in multimodal and long-document settings.

Typical hybrid systems combine:

- Dense retrievers (semantic similarity)

- Sparse retrievers (lexical matching)

- Sometimes vision-based retrievers

However, most systems combine these models at the output level, fusing scores or ranks:

- Linear interpolation

- Rank fusion

- Reciprocal Rank Fusion (RRF)

Score fusion combines decisions after retrieval.

GQR modifies the query representation before retrieval.

This shift from output fusion to representation refinement is the core conceptual contribution of the paper.

Core Idea

Given a primary retriever (e.g., dense or multimodal encoder) and a complementary retriever (e.g., sparse BM25), instead of fusing their retrieval results, GQR:

- Uses the complementary retriever to score documents

- Derives a refinement signal from those scores

- Updates the query embedding of the primary retriever

- Performs retrieval again using the refined query

Formally:

- Original query embedding: q

- Refined embedding: q′=q+αΔq

where Δq is computed from complementary retrieval signals.

This happens at test time, without retraining the base retriever.

Paper foundations

The paper states explicitly that the query encoder weights are frozen and the query embedding is treated as a learnable parameter. The optimization target is the embedding vector itself, not the query text. Like training a neural network, GQR updates the query’s numerical representation via backpropagation.

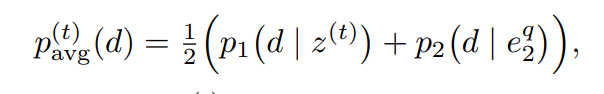

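Consensus distribution: The auxiliary retriever yields a distribution p_2(d \mid e^q_2) over candidates; the primary yields p_1(d \mid z^{(t)}). Their consensus is a weighted mixture:

p_{\mathrm{avg}}(d) = (1 - \lambda)\, p_1(d \mid z^{(t)}) + \lambda\, p_2(d \mid e^q_2)

where \lambda is the mixing weight (mixture_alpha in the code shown later, written α in its comments). The primary model adjusts its query embedding z^{(t)} so that p_1 moves toward p_{\mathrm{avg}}. The objective minimizes \mathrm{KL}(p_{\mathrm{avg}} \| p_1) with respect to z; the gradient step z^{(t+1)} = z^{(t)} - \alpha \nabla_z \mathcal{L}, with step size \alpha, updates only the query vector, not model weights.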
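To see why this update pulls the query toward documents the auxiliary retriever favors, it helps to write out the gradient in a simplified case. The derivation below is a sketch under assumptions that are not taken from the paper: the primary retriever is single-vector with dot-product scores $s_i = z^\top d_i$, the consensus is the mixture $p_{\mathrm{avg}} = (1-\lambda)\,p_1 + \lambda\,p_2$ with mixing weight $\lambda$, $T$ is the softmax temperature, and $p_{\mathrm{avg}}$ is treated as a fixed target while differentiating.

```latex
\begin{aligned}
p_1(d_i) &= \frac{\exp(s_i/T)}{\sum_j \exp(s_j/T)}, \qquad s_i = z^\top d_i,\\
\mathcal{L} &= \mathrm{KL}(p_{\mathrm{avg}} \,\|\, p_1)
  = \sum_i p_{\mathrm{avg}}(d_i)\,\log\frac{p_{\mathrm{avg}}(d_i)}{p_1(d_i)},\\
\frac{\partial \mathcal{L}}{\partial s_i} &= \frac{1}{T}\bigl(p_1(d_i) - p_{\mathrm{avg}}(d_i)\bigr),\\
\nabla_z \mathcal{L} &= \frac{1}{T}\sum_i \bigl(p_1(d_i) - p_{\mathrm{avg}}(d_i)\bigr)\, d_i
  = \frac{\lambda}{T}\sum_i \bigl(p_1(d_i) - p_2(d_i)\bigr)\, d_i .
\end{aligned}
```

Under these assumptions, a step along $-\nabla_z \mathcal{L}$ moves $z$ toward documents where $p_2 > p_1$ and away from documents where $p_1 > p_2$, which is the sense in which the auxiliary retriever guides the primary query.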

Two Retrievers in Hybrid Search

Each retriever encodes the same query and scores documents, but their outputs differ because they use different encoders, modalities, or scoring mechanisms (e.g., dot-product vs MaxSim).

Where they differ

(A) Representation level: Query embeddings $e_1^q$ and $e_2^q$ live in different embedding spaces.

(B) Score level: For each document, similarity scores differ (e.g., retriever 1: 0.78, 0.34, 0.69; retriever 2: 0.62, 0.68, 0.87), so score distributions differ.

(C) Ranking level: Different scores produce different rankings (e.g., retriever 1: {d1, d3, d2}; retriever 2: {d3, d2, d1}).
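The example numbers above can be checked in a few lines. This is only an illustrative sketch: the temperature T = 0.1 is an assumed value, and `softmax` and `ranking` are hypothetical helper names, not functions from the GQR repository.

```python
import math

def softmax(scores, T=1.0):
    """Temperature-scaled softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp((s - m) / T) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def ranking(doc_ids, scores):
    """Document IDs sorted by descending score."""
    return [d for d, _ in sorted(zip(doc_ids, scores), key=lambda x: -x[1])]

docs = ["d1", "d2", "d3"]
scores_1 = [0.78, 0.34, 0.69]  # retriever 1 (primary)
scores_2 = [0.62, 0.68, 0.87]  # retriever 2 (auxiliary)

rank_1 = ranking(docs, scores_1)  # ['d1', 'd3', 'd2']
rank_2 = ranking(docs, scores_2)  # ['d3', 'd2', 'd1']

# Score distributions over the same three candidates (assumed T = 0.1).
p1 = softmax(scores_1, T=0.1)
p2 = softmax(scores_2, T=0.1)
```

The two distributions put their mass on different documents (d1 for the primary, d3 for the auxiliary), and this disagreement is the signal GQR uses.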

Why GQR needs them different

If both retrievers produced identical outputs, there would be no complementary signal and no refinement. GQR works because the auxiliary retriever captures information the primary misses (e.g., primary for visual alignment, auxiliary for lexical match). The disagreement between them carries useful signal.

Typical setup

| Primary | Auxiliary |
| --- | --- |
| Vision-centric multimodal encoder | Text-only dense retriever |
| Late interaction (MaxSim) | Single-vector dot-product |
| Larger index | Lightweight index |

Important nuance

GQR does not combine both outputs. The auxiliary retriever’s distribution over documents is a teacher signal; the primary query embedding is updated so its distribution moves toward the consensus of the two. The final ranking uses only the primary retriever. The auxiliary is guidance only.

Why This Matters

Score fusion assumes:

- Query embedding is fixed and correct

- Errors can be fixed by mixing scores

Dense retrievers often fail because the query embedding:

- Misses lexical cues

- Underrepresents rare tokens

- Is biased toward training distribution

GQR treats complementary retrieval as a form of weak supervision to reshape the query representation.

This reframes hybrid retrieval as a test-time optimization problem.

Multimodal Setting

The paper extends this idea to multimodal retrieval.

In multimodal hybrid systems:

- Vision-based retrievers capture layout and visual semantics

- Text-based sparse retrievers capture lexical precision

Visual retrievers (e.g., ColPali) are strong at layout and visual features but weak at exact lexical matching — specific words, numbers, or rare tokens. When a BM25-style auxiliary retriever signals “this document contains that exact term,” GQR injects that information into the primary model’s query vector, improving retrieval accuracy without changing the encoder.

Naive fusion struggles when the modalities disagree. GQR enables:

- Text-based signals to guide visual query embeddings

- Or vice versa

This is useful in:

- Visually rich documents

- Long PDFs

- Chart/table-heavy pages

- Multimodal QA benchmarks

Test-Time Optimization Perspective

GQR reframes retrieval as a query adaptation problem at inference time.

It is related to:

- Test-time adaptation

- Meta-learning

- Query expansion

- Prompt refinement

But differs in key ways:

- It does not generate synthetic text (unlike HyDE); it modifies the query embedding via backpropagation, not LLM text generation

- It does not retrain models; encoder weights stay frozen

- It operates directly in embedding space

- It uses real complementary scores

Algorithmic Outline

High-level procedure:

- Encode query using primary retriever → q

- Run complementary retriever → get document scores

- Construct refinement direction Δq

- Update query embedding

- Re-run primary retriever with refined query

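Computational overhead is modest: the complementary retriever scores the candidates once, a few gradient steps update the query embedding, and the primary retriever runs one extra retrieval.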
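The outline above can be sketched end to end on toy data. This is a minimal illustration, not the official implementation: it assumes a single-vector dot-product primary retriever, uses plain Python with a hand-written gradient instead of PyTorch autograd, and holds the consensus fixed within each step. The names `refine_query`, `mix`, and `lr`, and all embedding values, are made up for the example.

```python
import math

def softmax(scores, T=1.0):
    m = max(scores)
    exps = [math.exp((s - m) / T) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def kl(p, q, eps=1e-12):
    return sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q))

def refine_query(q, cand_docs, aux_scores, T=1.0, mix=0.5, lr=0.5, n_steps=20):
    """Toy GQR loop: nudge q so softmax(primary scores) approaches the consensus.

    Uses the closed-form gradient of KL(p_avg || p1) for dot-product scores,
    with p_avg held fixed within each step (an assumption of this sketch).
    """
    p2 = softmax(aux_scores, T)  # auxiliary distribution, computed once
    q = list(q)
    for _ in range(n_steps):
        p1 = softmax([dot(q, d) for d in cand_docs], T)
        p_avg = [(1 - mix) * a + mix * b for a, b in zip(p1, p2)]
        # dL/dq = (1/T) * sum_i (p1_i - p_avg_i) * d_i
        grad = [sum((p1[i] - p_avg[i]) * d[k] for i, d in enumerate(cand_docs)) / T
                for k in range(len(q))]
        q = [qk - lr * gk for qk, gk in zip(q, grad)]
    return q

# Toy candidate pool: three 2-D document embeddings (made-up values).
cand_docs = [[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]]
q0 = [1.0, 0.2]               # primary query embedding, favors document 0
aux_scores = [0.1, 2.0, 0.5]  # auxiliary retriever favors document 1

p2 = softmax(aux_scores)
p1_before = softmax([dot(q0, d) for d in cand_docs])
q_refined = refine_query(q0, cand_docs, aux_scores)
p1_after = softmax([dot(q_refined, d) for d in cand_docs])

kl_before = kl(p2, p1_before)
kl_after = kl(p2, p1_after)   # smaller: the primary distribution moved toward p2
```

Only `q` changes in the loop; the document embeddings and both scoring functions stay fixed, which mirrors the frozen-encoder setup described earlier.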

Code

The official implementation (GitHub) provides a clean PyTorch implementation of GQR and baseline fusion methods.

Fusion baselines (post-hoc)

Baseline methods in fusion_methods.py fuse rankings or scores after retrieval. The query embedding is never modified:

    def sim_score_fusion(r1, r2, normal_func=normalize_min_max, alpha=0.5):
        # r1, r2: retrieval results {query: [(doc_id, score), ...]}
        # ... (excerpt; setup of union, curr_r1, curr_r2 omitted)
        for doc in union:
            agg_score = alpha * get_score(doc, curr_r1) + (1 - alpha) * get_score(doc, curr_r2)
            raw_score_averages[doc] = agg_score
        # Returns fused ranking; query embedding unchanged

Score fusion normalizes outputs from each retriever (min-max or softmax) and takes a weighted average. The retriever models and query representations stay fixed.
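The excerpt above covers score fusion. For comparison, rank-level fusion such as RRF can be sketched as follows. This is a generic illustration, not the repository's code: the function name `rrf_fuse` is hypothetical, and the constant k = 60 is the commonly used default, assumed here.

```python
def rrf_fuse(rankings, k=60):
    """Reciprocal Rank Fusion: score(d) = sum over lists of 1 / (k + rank)."""
    fused = {}
    for ranked_docs in rankings:
        for rank, doc_id in enumerate(ranked_docs, start=1):
            fused[doc_id] = fused.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Sort by fused score, highest first; ties broken by doc_id for determinism.
    return sorted(fused, key=lambda d: (-fused[d], d))

primary_ranking = ["d1", "d3", "d2"]
auxiliary_ranking = ["d3", "d2", "d1"]
fused_ranking = rrf_fuse([primary_ranking, auxiliary_ranking])  # ['d3', 'd1', 'd2']
```

The fused list can only contain documents that appear in at least one input ranking, and neither query representation is touched.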

Retriever

The Retriever class in retriever.py loads precomputed embeddings and computes similarity. It supports single-vector (dot product) and multi-vector (MaxSim, for ColPali-style late interaction):

    def get_topk(d_embs, q_embs, k, is_multi=False):
        if is_multi:
            similarity = score_multi_vector(q_embs, d_embs, batch_size=8)
        else:
            similarity = torch.matmul(q_embs, d_embs.T)
        top_vals, top_idx = torch.topk(similarity, k=k, dim=-1)
        return top_vals, top_idx

    # Method of the Retriever class
    def compute_scores(self, q, d):
        if self.is_multi:
            similarity = score_multi_vector(q, d, batch_size=16)
            similarity = similarity / q.size(1)
        else:
            similarity = torch.matmul(q, d.T)
        return similarity

For multi-vector embeddings, MaxSim computes $\sum_i \max_j q_i^\top d_j$. The implementation uses pad_sequence for variable-length token sequences and a 4D einsum (bnd,csd->bcns) to compute token-level similarity in batches; this supports ColPali-style late interaction. Importantly, MaxSim is differentiable: the gradient flows through this scoring function to the query tokens, so GQR can refine each token embedding based on the auxiliary retriever's signal. This is crucial for multimodal late-interaction models.

Single-vector retrievers use an L2-normalized dot product. get_topk builds the candidate pool; compute_scores is called inside the optimization loop to obtain $p_1$.
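As a small worked instance of the MaxSim formula, the sketch below scores one multi-vector query against one document in plain Python. The embeddings are made-up 2-D vectors and `maxsim` is a hypothetical helper; it does not reproduce the batched PyTorch code in the repository.

```python
def maxsim(query_tokens, doc_tokens):
    """Sum over query tokens of the max dot product with any document token."""
    total = 0.0
    for q in query_tokens:
        total += max(sum(a * b for a, b in zip(q, d)) for d in doc_tokens)
    return total

query_tokens = [[1.0, 0.0], [0.0, 1.0]]            # two query token embeddings
doc_tokens = [[0.9, 0.1], [0.2, 0.8], [0.5, 0.5]]  # three document token embeddings

score = maxsim(query_tokens, doc_tokens)   # best matches are 0.9 and 0.8, summing to about 1.7
normalized = score / len(query_tokens)     # mirrors the division by q.size(1) above
```

Each query token contributes through its single best-matching document token, so a gradient step on the query only sees the document tokens that currently win the max.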

Loss functions

The code supports multiple loss functions. KL divergence is the default; Jensen-Shannon divergence (js_divergence) is a symmetric alternative that can behave more stably and improve optimization robustness:

    def kl_divergence(p, q, eps=1e-8):
        return torch.sum(p * torch.log((p + eps) / (q + eps)))

    def js_divergence(p, q, eps=1e-8):
        m = 0.5 * (p + q)
        return 0.5 * (torch.sum(p * torch.log((p + eps) / (m + eps)))
                      + torch.sum(q * torch.log((q + eps) / (m + eps))))

GQR core loop (iterative optimization)

GQR is not a one-shot formula; it performs test-time optimization. The query embedding is declared as a parameter and fine-tuned over n_steps iterations (typically via Adam) so the primary retriever's document distribution moves toward consensus with the auxiliary. The loss is KL or JS divergence. The refined query can then be used for full-index search (higher recall) or candidate re-ranking (lower latency). From query_optimizations.py:

    # Query made trainable
    qs = [q[i].unsqueeze(0).clone().detach().requires_grad_(True) for i in range(Q)]
    opt = optimizer(qs, lr=lr)  # Adam, etc.

    # Candidate pool: primary retriever's top-k
    top_k = get_topk(d, q, k, is_multi=main_model.is_multi)[1]
    set1 = d[top_k[q_id]]  # docs for this query

    # Sparse (BM25): slice_sparse_coo_tensor for efficient candidate-pool score extraction
    if f_d.is_sparse:
        set2 = slice_sparse_coo_tensor(f_d, slice_indices=top_k[q_id]).to(device)
    else:
        set2 = f_d[top_k[q_id]].to(device)

    # Softmax distributions (paper Eq. 8-9)
    d2 = F.softmax(feedback_model.compute_scores(q2, set2) / T, -1)  # p_2 from complementary
    d1 = F.softmax(main_model.compute_scores(q1, set1) / T, -1)  # p_1 from primary

    # Consensus: p_avg = (1-α) p_1 + α p_2, with α = mixture_alpha
    mixture_d = (1 - mixture_alpha) * d1 + mixture_alpha * d2

    # Iterative optimization: n_steps gradient steps
    for step in range(n_steps):
        d1 = F.softmax(main_model.compute_scores(q1, set1) / T, -1)
        loss = loss_func(mixture_d, d1)  # KL or JS
        opt.zero_grad(set_to_none=True)
        loss.backward(retain_graph=True)
        opt.step()

When the auxiliary retriever is sparse (e.g., BM25), slice_sparse_coo_tensor efficiently slices the sparse document matrix to the candidate pool, integrating dense and sparse signals in a unified tensor workflow without full dense conversion.

With Search vs No Search

Two execution modes control the trade-off between latency and recall:

- With Search (optimize_queries_with_search): After refinement, run retrieval again over the full index with the refined query. Higher recall, higher latency.

- No Search (optimize_queries_no_search): Keep the candidate pool from the optimization phase; only re-score within that pool. Faster, but recall is limited to the initial candidates.

Selection depends on whether full search or candidate re-ranking is acceptable for the application.
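The difference between the two modes can be shown on a toy index. This is a sketch with made-up 2-D embeddings and hypothetical helper names (`rerank_no_search`, `rerank_with_search`); it is not the repository's `optimize_queries_with_search` or `optimize_queries_no_search`, and the refined query is simply assumed rather than optimized.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def rerank_no_search(q_refined, index, candidate_ids):
    """'No Search': re-score only the candidate pool with the refined query."""
    return sorted(candidate_ids, key=lambda i: -dot(q_refined, index[i]))

def rerank_with_search(q_refined, index, k):
    """'With Search': score every document in the index, keep the top k."""
    return sorted(index, key=lambda i: -dot(q_refined, index[i]))[:k]

# Made-up 2-D index; "d4" was not in the initial candidate pool.
index = {"d1": [1.0, 0.0], "d2": [0.6, 0.4], "d3": [0.5, 0.5], "d4": [0.0, 1.0]}
candidate_ids = ["d1", "d2", "d3"]  # initial top-3 for an unrefined query like [1.0, 0.0]
q_refined = [0.2, 1.0]              # assumed output of the refinement loop

no_search = rerank_no_search(q_refined, index, candidate_ids)  # ['d3', 'd2', 'd1']
with_search = rerank_with_search(q_refined, index, k=3)        # ['d4', 'd3', 'd2']
```

Only the full-index mode can surface d4, which is why it trades extra latency for recall.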

Pseudocode summary

    q = primary.encode(query_text)            # primary query embedding (optimized)
    q_aux = complementary.encode(query_text)  # complementary query embedding (fixed)
    candidates = primary.top_k(q, k)

    guidance_scores = complementary.compute_scores(q_aux, candidates)
    p2 = softmax(guidance_scores / T)

    for t in range(n_steps):
        primary_scores = primary.compute_scores(q, candidates)
        p1 = softmax(primary_scores / T)
        p_avg = (1 - alpha) * p1 + alpha * p2
        loss = KL(p_avg, p1)
        q = q - lr * grad(loss, q)

    final_results = primary.search(q)

Fusion vs GQR

| | Baseline fusion | GQR |
| --- | --- | --- |
| Query | Fixed | Refined |
| Aggregation | (primary scores + guidance scores) → rerank | guidance → update q → re-retrieve |
| Level | Score or rank | Representation |

GQR keeps the primary retriever fixed and absorbs complementary signals by refining the query representation, which also works with multi-vector retrievers (e.g., MaxSim).

Empirical Findings

The paper evaluates primarily on ViDoRe (Visual Document Retrieval), which includes visually rich documents such as charts, infographics, and complex layouts. With ColPali (vision-centric multi-vector) as the primary retriever and BM25 (sparse text-based) as the auxiliary, GQR consistently outperforms standalone ColPali in nDCG@5 and Recall@5. The largest gains appear on subsets where fine-grained text matters (e.g., ChartQA, InfographicVQA), where the auxiliary retriever supplies lexical signals the vision model tends to miss.

GQR vs traditional fusion

Unlike RRF, which only reorders documents that at least one retriever has already returned, GQR refines the query representation. In “With Search” mode, the refined query can surface relevant documents that were not in the initial retrieval. Compared with static score fusion (linear interpolation), GQR is more robust across datasets; score fusion often needs manual tuning of weights per domain, whereas GQR adapts via test-time optimization without retraining. In out-of-distribution settings where the primary model was not trained on a given document type (e.g., specialized financial charts), the auxiliary retriever’s signal acts as a correction, improving accuracy without model retraining.

With Search vs No Search

- With Search: Re-retrieval over the full index with the refined query. Best for recall improvement.

- No Search: Re-scoring only within the initial top-k. Faster and useful for improving precision within the candidate pool.

Modality synergy

Experiments show complementary effects between vision and text:

- Lexical guidance for vision: BM25 provides specific terms (e.g., dates, IDs in tables) that visual encoders tend to blur, helping ColPali focus on fine-grained text.

- Visual guidance for text: When a dense text retriever is primary and a vision model is auxiliary, the vision signal helps the text model capture layout and visual semantics it would otherwise ignore.

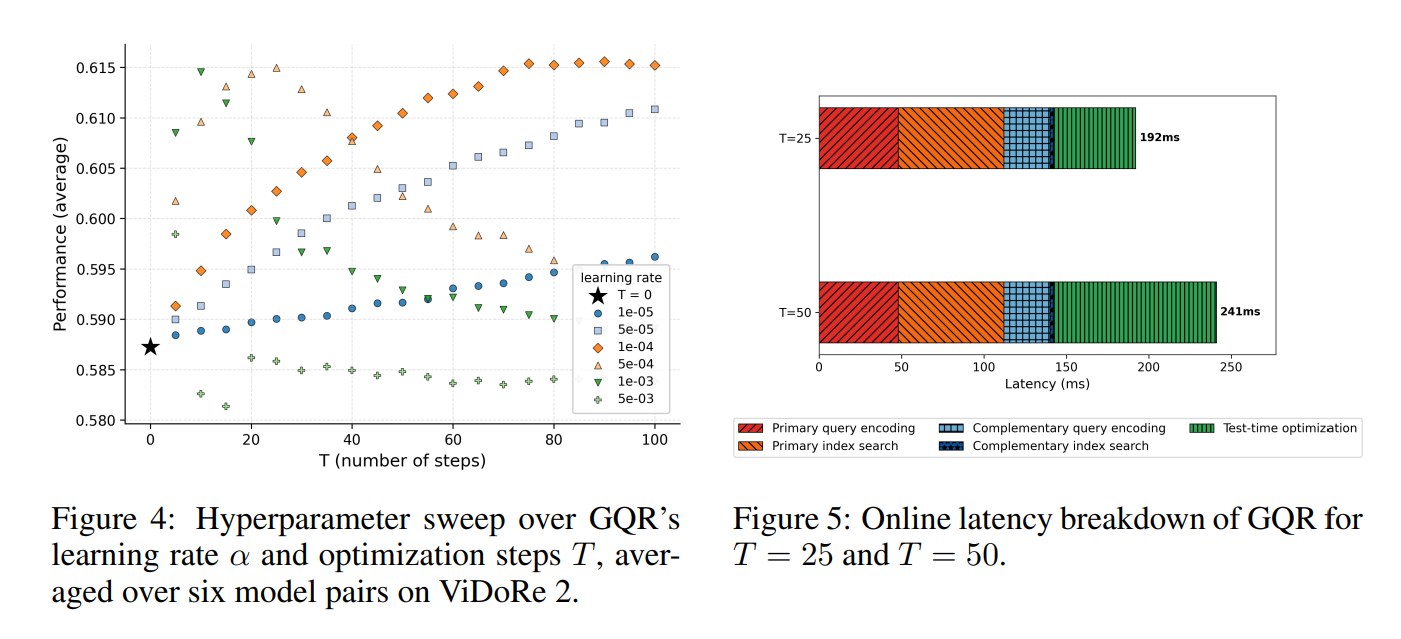

Optimization efficiency

Most performance gains occur within about 10–20 optimization steps; further iterations show diminishing returns. GQR adds inference-time cost but remains faster than LLM-based query rewriting (e.g., HyDE) because it operates directly on embeddings via gradient descent.

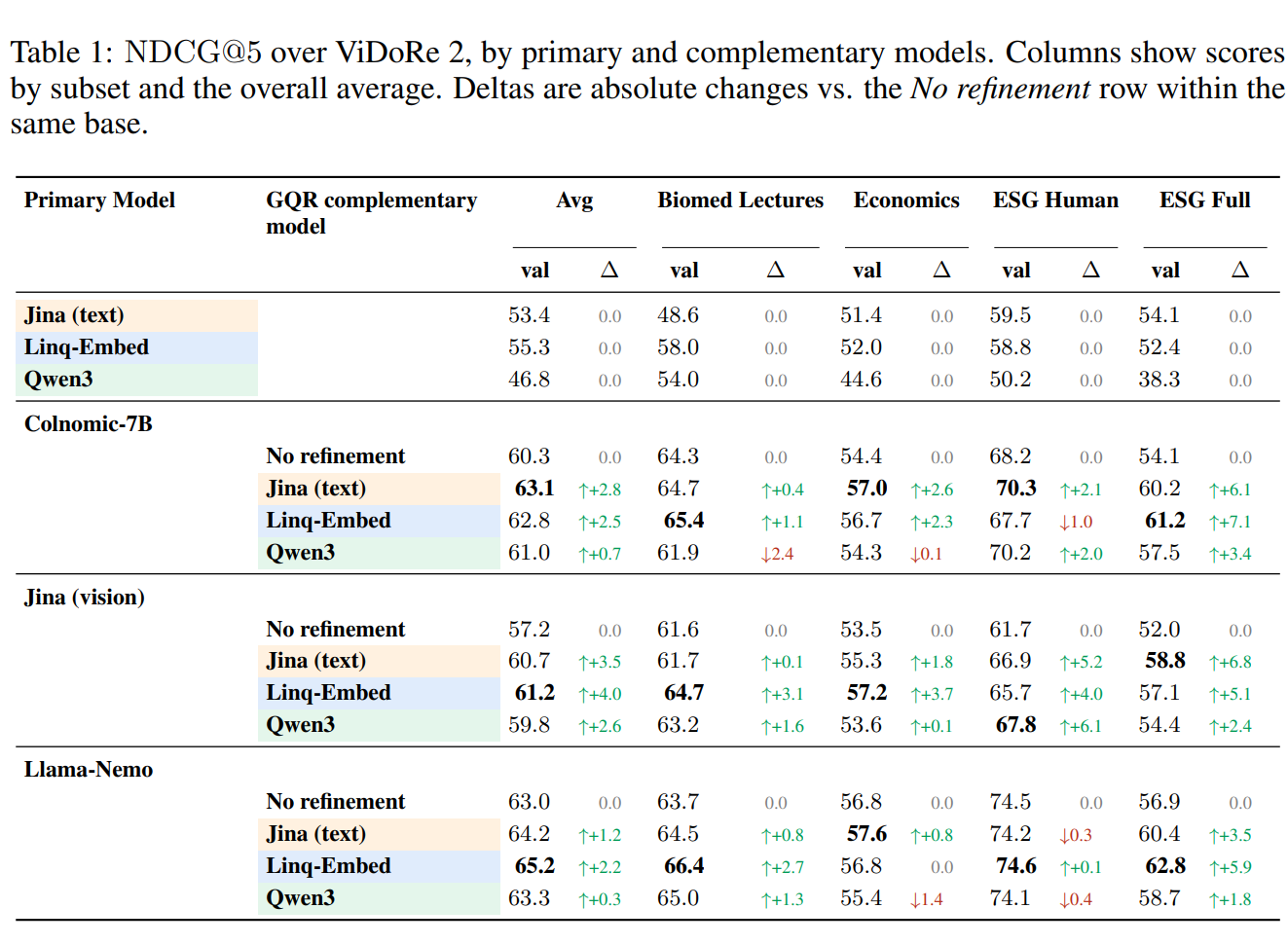

Retrieval performance

The paper reports nDCG@5 by primary model, complementary model, and subset (Biomed Lectures, Economics, ESG Human, ESG Full). Deltas are relative to the “No refinement” baseline for each primary.

Primary models: Colnomic-7B (vision), Jina (vision), Llama-Nemo (vision); Jina (text) and Linq-Embed also appear as text primaries. Complementary models: Jina (text), Linq-Embed, Qwen3.

Findings: Jina (text) and Linq-Embed generally yield strong gains as complements; Qwen3 is more mixed and sometimes lowers scores on some subsets. ESG Full often shows the largest delta improvements. Linq-Embed frequently attains the best average across subsets for Colnomic-7B, Jina (vision), and Llama-Nemo. Text-as-complement (e.g., Jina text guiding Colnomic or Jina vision) adds lexical signal; vision-as-complement adds layout and visual semantics for text primaries.

Indicative comparison

| Setup | Query updated? | Relative nDCG@5 (ViDoRe) |
| --- | --- | --- |
| ColPali (standalone) | No | Baseline |
| ColPali + BM25 (RRF) | No | Moderate gain |
| GQR (ColPali, BM25 auxiliary) | Yes | Highest |

Comparison with Traditional Hybrid

| Method | Fusion level | Query modified | Requires retraining |
| --- | --- | --- | --- |
| Linear interpolation | Score | No | No |
| RRF | Rank | No | No |
| Late fusion | Score | No | No |
| GQR | Representation | Yes | No |

Conceptual Takeaway

The main contribution is the reframing:

Hybrid retrieval can adapt query representations instead of mixing scores.

Instead of post-hoc score or rank fusion, the paper argues that retrievers can reach consensus on document relevance and refine the query embedding accordingly. This is supported by the consensus distribution, the KL-based optimization, and empirical gains on ViDoRe.

Thank you!

References

- Official paper: Guided Query Refinement: Multimodal Hybrid Retrieval with Test-Time Optimization (ICLR 2026).

- Official code: IBM/test-time-hybrid-retrieval — Full PyTorch implementation of GQR, including the optimization loops and multimodal retriever benchmarks.

- ColPali: Efficient Document Retrieval with Vision Language Models (ICLR 2025)

- Dataset: ViDoRe Benchmark V2: Raising the Bar for Visual Retrieval
